Recurse Center: End of W3

I meant to write this last week. Here are a few things I learned from running experiments on distributed data-parallel training of AI models, while practicing Vim along the way:

Without any other optimization, training with 2 GPUs on 1 node was 45% faster than with a single GPU, and the quality of the results stayed the same. It is probably not a full 2x speedup because of communication overhead, such as synchronizing gradients across the GPUs before each iteration's weight update.
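A toy cost model makes the "not quite 2x" intuition concrete. It assumes (hypothetically) that each step's compute time is fixed per GPU, that the per-GPU batch size stays the same so 2 GPUs take half as many steps per epoch, and that every multi-GPU step pays a fixed gradient-synchronization cost. The function name and all numbers are made up for illustration, not measurements from these experiments.

```python
import math


def epoch_time_s(n_samples: int, per_gpu_batch: int, n_gpus: int,
                 compute_s: float, allreduce_s: float) -> float:
    """Estimated seconds per epoch under a toy data-parallel cost model.

    Each GPU processes `per_gpu_batch` samples per step, so the global batch
    is per_gpu_batch * n_gpus and the epoch needs proportionally fewer steps.
    With more than one GPU, every step also pays a fixed gradient sync cost.
    """
    global_batch = per_gpu_batch * n_gpus
    steps = math.ceil(n_samples / global_batch)
    step_s = compute_s + (allreduce_s if n_gpus > 1 else 0.0)
    return steps * step_s


# Hypothetical numbers: 50k samples, 250 per GPU, 1.0 s compute, 0.1 s sync.
t1 = epoch_time_s(50_000, 250, 1, compute_s=1.0, allreduce_s=0.1)
t2 = epoch_time_s(50_000, 250, 2, compute_s=1.0, allreduce_s=0.1)
print(f"1 GPU: {t1:.1f} s, 2 GPUs: {t2:.1f} s, speedup: {t1 / t2:.2f}x")
```

In this model the speedup approaches 2x only as the synchronization cost shrinks relative to the per-step compute.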

Because the model now sees twice as much data per batch (the global batch size doubles), the simplest way to account for it is to double the learning rate: each gradient is averaged over twice as many samples, so each update is less noisy and the model can afford to take bigger steps. Alternatively, I could halve the batch size, but that also changes the number of optimizer updates (and the training dynamics) for the same number of epochs, which can make benchmarks less directly comparable.
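Doubling the learning rate along with the batch size is usually called the linear scaling rule. A minimal sketch, assuming hypothetical base values (learning rate 0.1 at batch size 256) and a hypothetical 50,000-sample dataset, also shows the side effect mentioned above: a larger global batch means fewer optimizer updates per epoch.

```python
import math


def scaled_lr(base_lr: float, base_batch: int, new_batch: int) -> float:
    """Linear scaling rule: the LR grows in proportion to the global batch size."""
    return base_lr * (new_batch / base_batch)


def updates_per_epoch(n_samples: int, global_batch: int) -> int:
    """Number of optimizer steps needed to see every sample once."""
    return math.ceil(n_samples / global_batch)


base_lr, per_gpu_batch = 0.1, 256  # hypothetical single-GPU settings
for n_gpus in (1, 2):
    global_batch = per_gpu_batch * n_gpus
    print(f"{n_gpus} GPU(s): global batch {global_batch}, "
          f"lr {scaled_lr(base_lr, per_gpu_batch, global_batch):.2f}, "
          f"{updates_per_epoch(50_000, global_batch)} updates/epoch")
```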

I also tried to raise CPU utilization so that the GPU would be kept busier. On 1 GPU, increasing the number of data-loading workers from 8 to 32 raised both CPU and GPU utilization by 25% and cut training time by 25%. Training time stopped improving by 60 workers, and it may have plateaued well before that, since extra workers add overhead (I was working with 60 physical cores).
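One way to see why adding workers stops helping is a toy pipeline model (an assumption, not something measured here): if each data-loading worker prepares batches at a fixed rate and the GPU can consume at most some fixed rate, throughput is capped by whichever side is slower. The rates and helper names below are hypothetical, and the model ignores the per-worker overhead (memory, process scheduling, contention) that makes too many workers actively harmful.

```python
import math


def batches_per_s(n_workers: int, per_worker_rate: float, gpu_rate: float) -> float:
    """Pipeline throughput: data loading or GPU compute, whichever is slower."""
    return min(n_workers * per_worker_rate, gpu_rate)


def workers_to_saturate(per_worker_rate: float, gpu_rate: float) -> int:
    """Smallest worker count at which the GPU, not data loading, is the bottleneck."""
    return math.ceil(gpu_rate / per_worker_rate)


# Hypothetical rates: each worker prepares 2 batches/s, the GPU consumes 40 batches/s.
for n in (8, 16, 32, 60):
    print(n, "workers ->", batches_per_s(n, 2.0, 40.0), "batches/s")
print("saturates at", workers_to_saturate(2.0, 40.0), "workers")
```

Under this model the useful worker count is set by the ratio of the two rates, which is why sweeping a few worker counts is a practical way to find the knee.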

I noticed that training on my local GPU was much faster than on the equivalent single GPU rented on RunPod, which led me down a rabbit hole of trying to attribute the difference. I was able to trace it to:

a good amount of it coming from storage: on RunPod, I had stored my data on a network volume, so there was a network cost every time data moved to my dataloader for preprocessing. This was quickly fixed once I requested a very large local disk on RunPod.

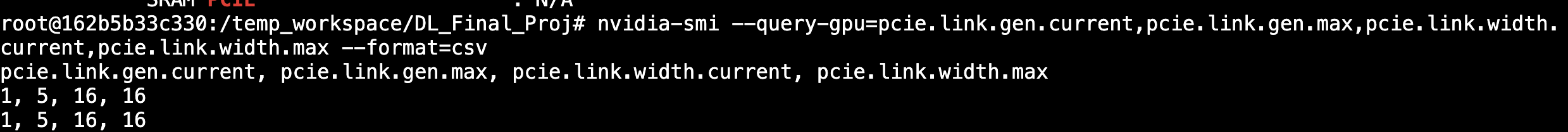
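To check whether storage is the bottleneck, one rough test is to time raw file reads from the data directory on each machine (for example, a network volume versus local disk). A minimal stdlib sketch; `read_throughput_mb_s` is a hypothetical helper, and the result is affected by OS page caching, so a fair comparison needs a cold cache or more data than fits in RAM.

```python
import time
from pathlib import Path


def read_throughput_mb_s(data_dir: str, chunk_size: int = 1 << 20) -> tuple[int, float]:
    """Read every file under data_dir once; return (bytes_read, MB/s)."""
    total = 0
    start = time.perf_counter()
    for path in Path(data_dir).rglob("*"):
        if path.is_file():
            with open(path, "rb") as f:
                # Read in fixed-size chunks so large files are never fully in memory.
                while chunk := f.read(chunk_size):
                    total += len(chunk)
    elapsed = time.perf_counter() - start
    rate = (total / 1e6) / elapsed if elapsed > 0 else float("inf")
    return total, rate
```

Calling it with the same dataset path on each machine (the path is whatever your data directory is) gives directly comparable MB/s figures.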

But there was another large chunk of time that remained unexplained even with an otherwise identical setup. That was when I discovered that I was getting a shitty PCIe link on RunPod (PCIe is the interface connecting the CPU to the GPU, and its generation and lane count largely determine the bandwidth between them). It was running at Gen1 when the hardware could support up to Gen5, which is what my local GPU uses and which offers roughly 16x more bandwidth. Is this normal? I almost rented some AWS GPUs to check that as well.
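The roughly 16x figure follows from the PCIe transfer rates: Gen1 runs at 2.5 GT/s per lane with 8b/10b encoding, while Gen5 runs at 32 GT/s with 128b/130b encoding. The sketch below computes theoretical x16 bandwidth per direction and parses the link state that `nvidia-smi --query-gpu` reports (`pcie.link.gen.current`, `pcie.link.gen.max` and the matching `width` fields). The helper names are hypothetical. One caveat when checking this: GPUs commonly drop to a lower link generation when idle to save power, so the current generation is only meaningful while the GPU is under load.

```python
import subprocess

# Per-lane transfer rate (GT/s) and line-encoding efficiency by PCIe generation.
PCIE_GENS = {
    1: (2.5, 8 / 10),
    2: (5.0, 8 / 10),
    3: (8.0, 128 / 130),
    4: (16.0, 128 / 130),
    5: (32.0, 128 / 130),
}


def pcie_gb_s(gen: int, lanes: int = 16) -> float:
    """Theoretical one-direction bandwidth in GB/s (ignores protocol overhead)."""
    rate_gt_s, efficiency = PCIE_GENS[gen]
    return rate_gt_s * efficiency * lanes / 8  # 8 bits per byte


def parse_link_csv(line: str) -> dict[str, int]:
    """Parse one line of the nvidia-smi query below (noheader, nounits)."""
    idx, gen_cur, gen_max, width_cur, width_max = (int(v) for v in line.split(","))
    return {"index": idx, "gen_current": gen_cur, "gen_max": gen_max,
            "width_current": width_cur, "width_max": width_max}


def query_links() -> list[dict[str, int]]:
    """Ask nvidia-smi for each GPU's current and maximum PCIe link state."""
    out = subprocess.run(
        ["nvidia-smi",
         "--query-gpu=index,pcie.link.gen.current,pcie.link.gen.max,"
         "pcie.link.width.current,pcie.link.width.max",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return [parse_link_csv(row) for row in out.strip().splitlines()]


print(f"Gen1 x16: {pcie_gb_s(1):.1f} GB/s, Gen5 x16: {pcie_gb_s(5):.1f} GB/s, "
      f"ratio {pcie_gb_s(5) / pcie_gb_s(1):.1f}x")
```

On a machine with an NVIDIA driver installed, `query_links()` returns one dict per GPU; a `gen_current` well below `gen_max` during training is the symptom described above.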

Finally, combining the data-loading optimization (increasing from 8 to 16 workers) with 2 GPUs on the same node made training 54% faster than the baseline.

The bigger conclusion, though, is that while using more GPUs did train faster, I really did not need them (at least for this model), because my GPU utilization hovered around 30%.
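A utilization figure like this is easy to check by sampling `nvidia-smi` while training runs. A minimal sketch, assuming an NVIDIA driver is installed; `sample_utilization`, `mean_per_gpu` and the sampling interval are hypothetical choices. Note that `utilization.gpu` reports the fraction of time at least one kernel was running, not how fully the GPU's cores were used.

```python
import statistics
import subprocess
import time


def parse_utilization(csv_text: str) -> list[int]:
    """Parse `utilization.gpu` output: one integer percent per GPU, one per line."""
    return [int(v) for v in csv_text.split()]


def mean_per_gpu(readings: list[list[int]]) -> dict[int, float]:
    """Average a list of per-sample readings into one mean (%) per GPU index."""
    # zip(*readings) transposes so each tuple holds one GPU's readings over time.
    return {gpu: statistics.fmean(vals) for gpu, vals in enumerate(zip(*readings))}


def sample_utilization(n_samples: int = 30, interval_s: float = 1.0) -> dict[int, float]:
    """Poll nvidia-smi n_samples times and return mean utilization per GPU."""
    readings = []
    for _ in range(n_samples):
        out = subprocess.run(
            ["nvidia-smi", "--query-gpu=utilization.gpu",
             "--format=csv,noheader,nounits"],
            capture_output=True, text=True, check=True,
        ).stdout
        readings.append(parse_utilization(out))
        time.sleep(interval_s)
    return mean_per_gpu(readings)
```

Running `sample_utilization()` from a second terminal during training gives a number comparable to the ~30% above; an average well below 100% suggests the GPU is often waiting on something else, such as data loading.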